And we’re back. Welcome to Data Science Some Days, a series where I go through some of the things I’ve learned in the field of Data Science.

For those who are new… I write in Python, these aren’t tutorials, and I’m bad at this.

When I’m cooking, sometimes I’m too nervous to taste the food as I’m going along. It’s like, I must think that I’m being judged by my guests over my shoulder.

That’s a rubbish approach because I might reach the end and not realise that I’ve missed some important seasoning.

So, if you want a good dinner, it’s important you just check as you’re going along.

This analogy doesn’t quite work with what I’m about to explain but I’ve committed. It’s staying.

Cross-Validation

The first introduction to testing is usually just an 80/20 split. This is where you take 80% of your data and use that to train your model. The remaining 20% is held back for validation. It comes in the following form:

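from sklearn.model_selection import train_test_split

# Features are everything except the target column, so the answer doesn't leak into X
X = training_data.drop(columns=["prediction target"])

y = training_data["prediction target"]

X_train, X_valid, y_train, y_valid = train_test_split(X, y, train_size=0.8, test_size=0.2)

This is fine for large data sets because the 20% you’re using to test your model against will be large enough to offset the random chance that you’ve just managed to pick a “good 20%”.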

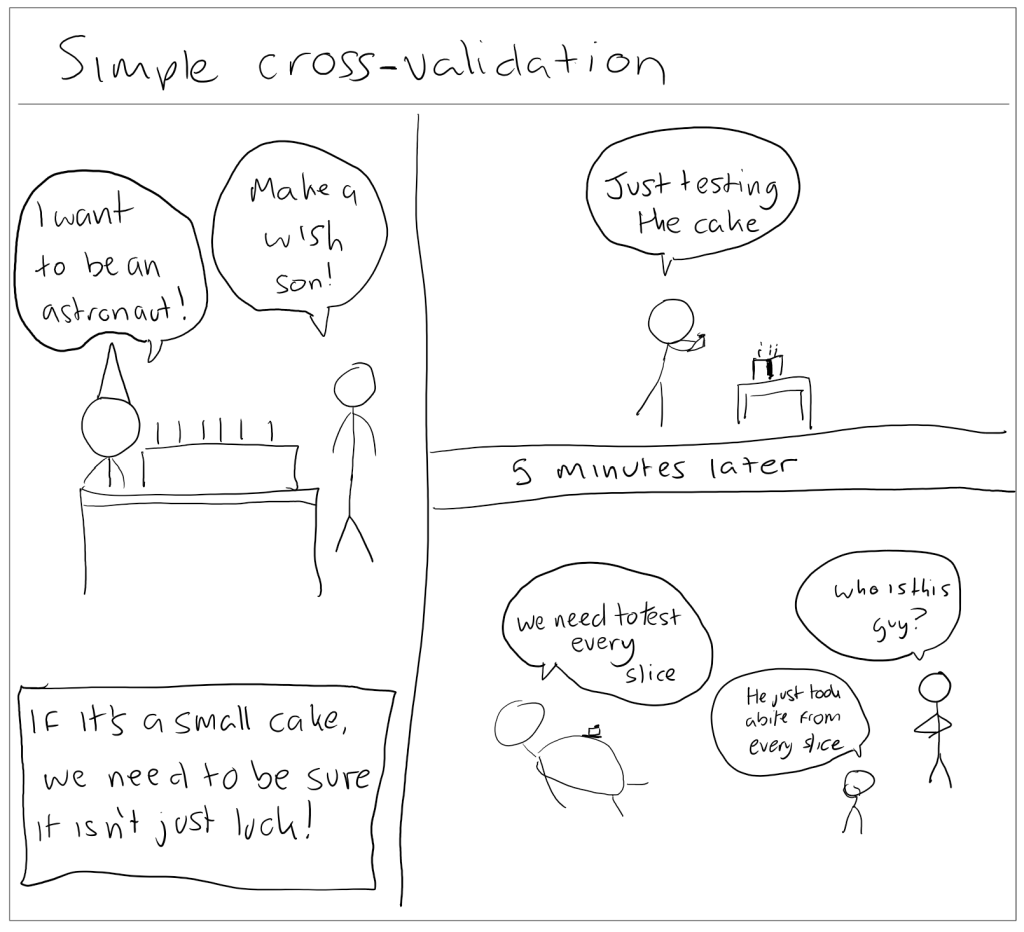

With small data sets (such as the one from the Housing Prices competition from Kaggle), you could just get lucky with your validation split. This is where cross-validation comes into play.
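To see the "lucky split" problem for yourself, here is a rough sketch. It assumes a synthetic data set from `make_regression` and a plain `LinearRegression` as stand-ins (neither is the Kaggle housing data or my actual model), and it just repeats the 80/20 split with different random seeds.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split

# Synthetic stand-in for a small data set (an assumption, not the housing data).
X, y = make_regression(n_samples=100, n_features=5, noise=25.0, random_state=0)

holdout_maes = []
for seed in range(10):
    # Same data, same model; only the rows that land in the 20% change.
    X_train, X_valid, y_train, y_valid = train_test_split(
        X, y, train_size=0.8, test_size=0.2, random_state=seed
    )
    model = LinearRegression().fit(X_train, y_train)
    holdout_maes.append(mean_absolute_error(y_valid, model.predict(X_valid)))

holdout_maes = np.array(holdout_maes)
print("MAE per split:", holdout_maes.round(1))
print("best:", holdout_maes.min().round(1), "worst:", holdout_maes.max().round(1))
```

The gap between the best and worst score is pure luck of the draw, since nothing about the model changed between runs.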

This means you split your data into several subsets and take turns: each subset gets used once as the validation set while the model is trained on the rest. It just takes longer to run (because you’re training and testing your model several times).
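Here is a small sketch of that turn-taking using `KFold` from sklearn on ten toy rows. The data is made up and the model fitting is left out; the point is only to show which rows end up in the validation set on each round.

```python
import numpy as np
from sklearn.model_selection import KFold

X = np.arange(20).reshape(10, 2)  # 10 toy rows, 2 features each (made-up data)

kfold = KFold(n_splits=5)
times_validated = np.zeros(len(X), dtype=int)

for fold, (train_idx, valid_idx) in enumerate(kfold.split(X), start=1):
    # A real run would fit on X[train_idx] and score on X[valid_idx] here.
    times_validated[valid_idx] += 1
    print(f"fold {fold}: train on {len(train_idx)} rows, "
          f"validate on rows {valid_idx.tolist()}")

# Every row should have been in a validation set exactly once.
print(times_validated)
```

By the end, every row has been used for validation exactly once, so no single "good 20%" gets to decide the score.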

It looks like this instead:

# cross_val_score comes from sklearn.model_selection
from sklearn.model_selection import cross_val_score

# The pipeline here just contains my model and the different things I've done to clean up the data

# sklearn scorers use "higher number is better", so the error comes back negative; multiplying by -1 turns it back into a positive MAE

scores = -1 * cross_val_score(my_pipeline, X, y,
                              cv=5,
                              scoring='neg_mean_absolute_error')

“cv=5” sets the number of subsets, better known as “folds”.

The more folds you use, the smaller the chunk of data you validate against each time, and the more times the model has to be trained. It might take longer but with small data sets, the difference is negligible.
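To put numbers on that trade-off, this sketch counts the folds and the validation rows per fold for a few values of `cv`. It assumes 100 placeholder rows, which is just a round number picked for illustration.

```python
import numpy as np
from sklearn.model_selection import KFold

X = np.zeros((100, 1))  # 100 placeholder rows; the values don't matter here

fold_summary = {}
for n_folds in (2, 5, 10):
    splits = list(KFold(n_splits=n_folds).split(X))
    valid_size = len(splits[0][1])  # rows in the first fold's validation set
    fold_summary[n_folds] = (len(splits), valid_size)
    print(f"cv={n_folds}: {len(splits)} model fits, "
          f"{valid_size} validation rows per fold")
```

With 100 rows, going from 5 folds to 10 halves each validation set (20 rows down to 10) and doubles the number of times the model is fitted.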

It’ll spit out an array of scores, one per fold. Average them to get a single number for the model, then compare that average across models to pick the best one.
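Putting it together, here is a self-contained sketch of that compare-the-averages step. Everything in it is an assumption for illustration: the synthetic data, the `SimpleImputer` plus `RandomForestRegressor` pipeline, the helper name `mean_cv_mae`, and the two `n_estimators` values being compared. Swap in your own pipeline and data.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.impute import SimpleImputer
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline

# Synthetic stand-in data (an assumption, not the Kaggle housing data).
X, y = make_regression(n_samples=200, n_features=8, noise=20.0, random_state=0)


def mean_cv_mae(n_estimators):
    """Average MAE over 5 folds for a hypothetical example pipeline."""
    pipeline = Pipeline(steps=[
        ("preprocessor", SimpleImputer()),
        ("model", RandomForestRegressor(n_estimators=n_estimators, random_state=0)),
    ])
    # Scores come back negative, so flip the sign to get a positive MAE per fold.
    scores = -1 * cross_val_score(
        pipeline, X, y, cv=5, scoring="neg_mean_absolute_error"
    )
    return scores.mean()


# One averaged score per candidate model; lower MAE is better.
results = {n: mean_cv_mae(n) for n in (10, 50)}
best = min(results, key=results.get)
print(results)
print("lowest average MAE with n_estimators =", best)
```

Because each candidate is scored on the same five folds, the averages are a fairer comparison than a single 80/20 split would be.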

Conclusion

Cross-validation is a helpful way to test your models. It helps reduce the chance that you simply got lucky with the validation portion of your data.

With larger data sets, this is less likely though.

It’s important to keep in mind that cross-validation increases the run time of your code because it trains and scores your model multiple times (once per fold).

Also, I’m aware that my comic doesn’t make that much sense but it made me laugh so I’m keeping it.

Further reading

- Cross-validation | Kaggle Intermediate Machine Learning – Alexis Cook